SysAid is an enterprise ITSM platform that has grown entirely through direct sales. Every new customer started with a sales conversation, a demo, and a procurement cycle. The product had never had to make a first impression on its own.

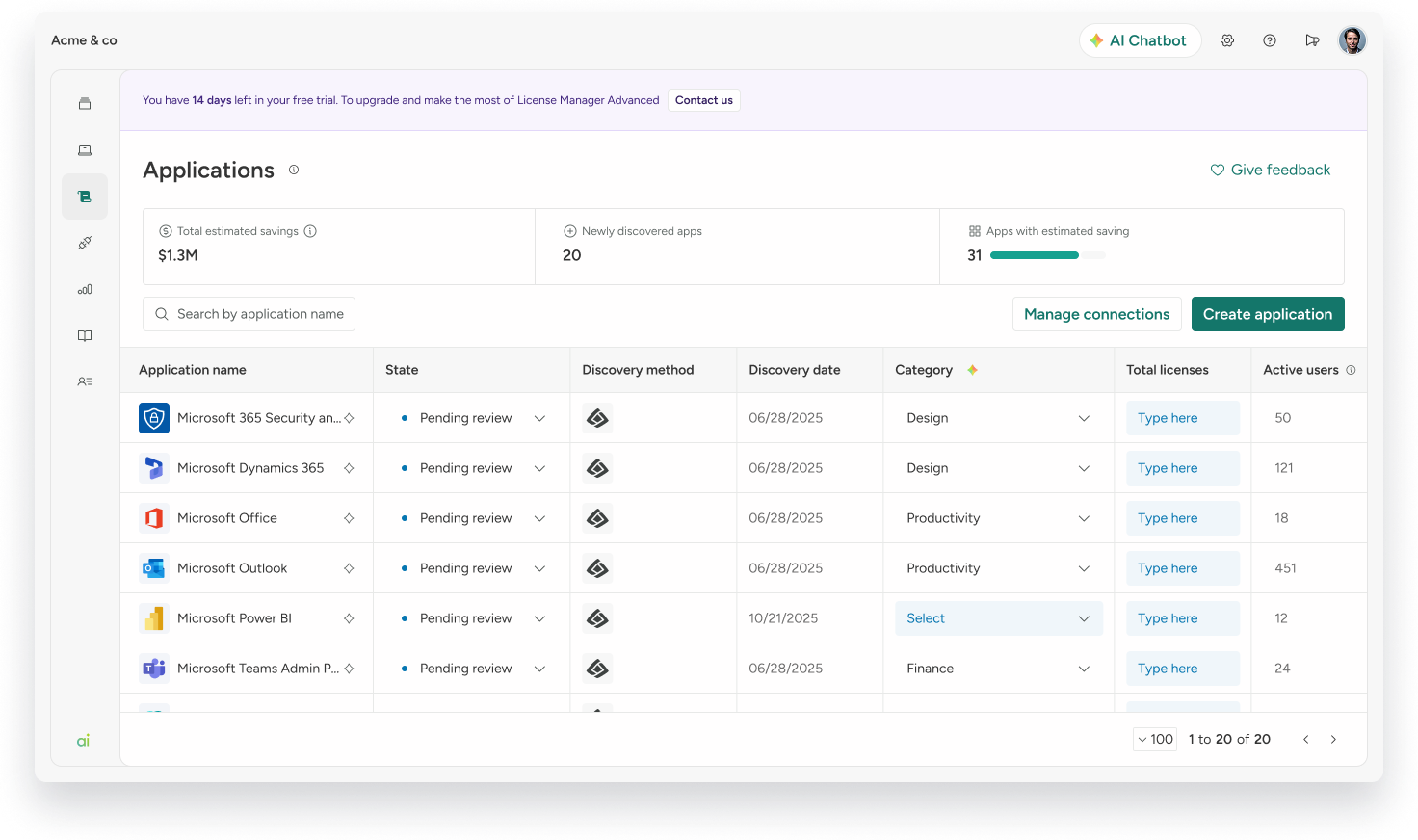

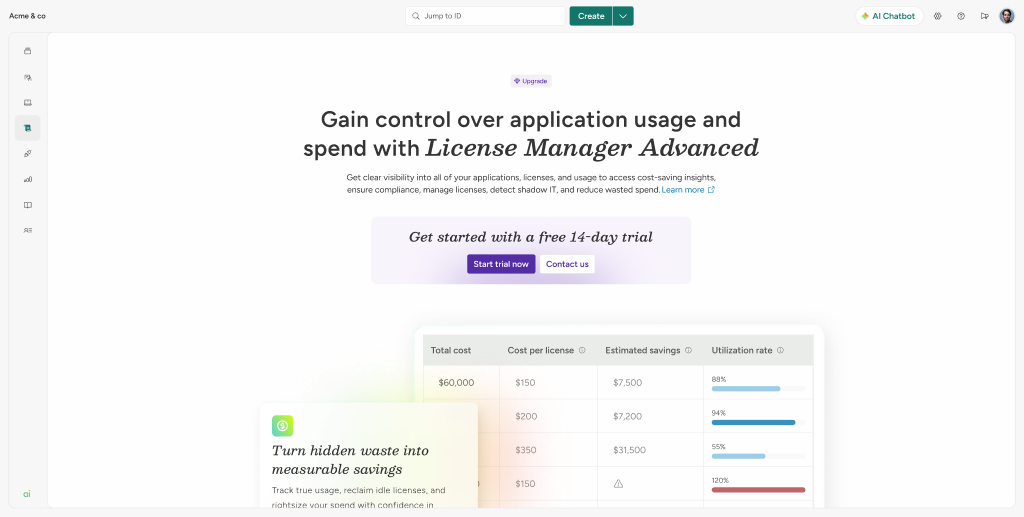

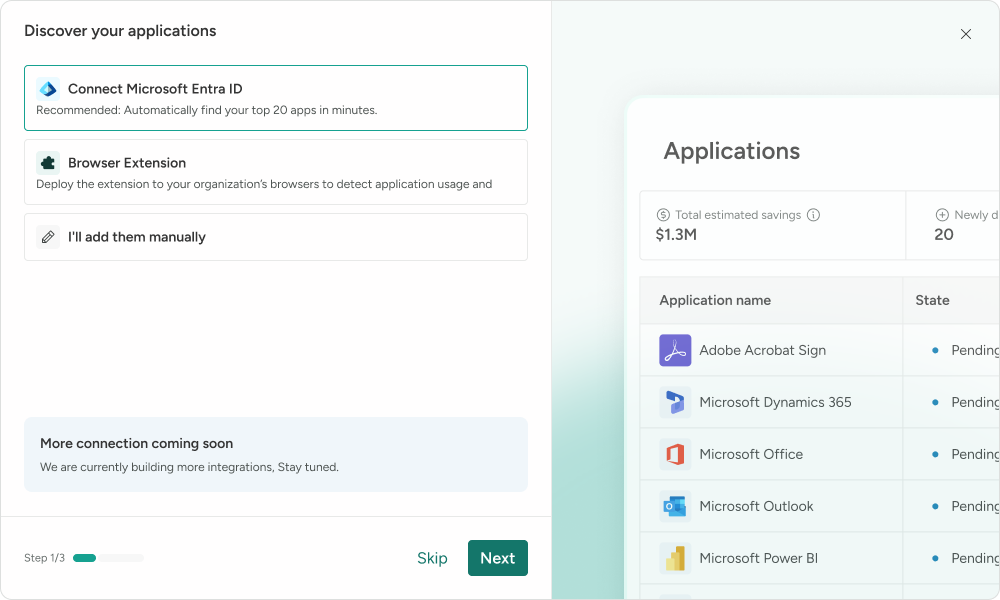

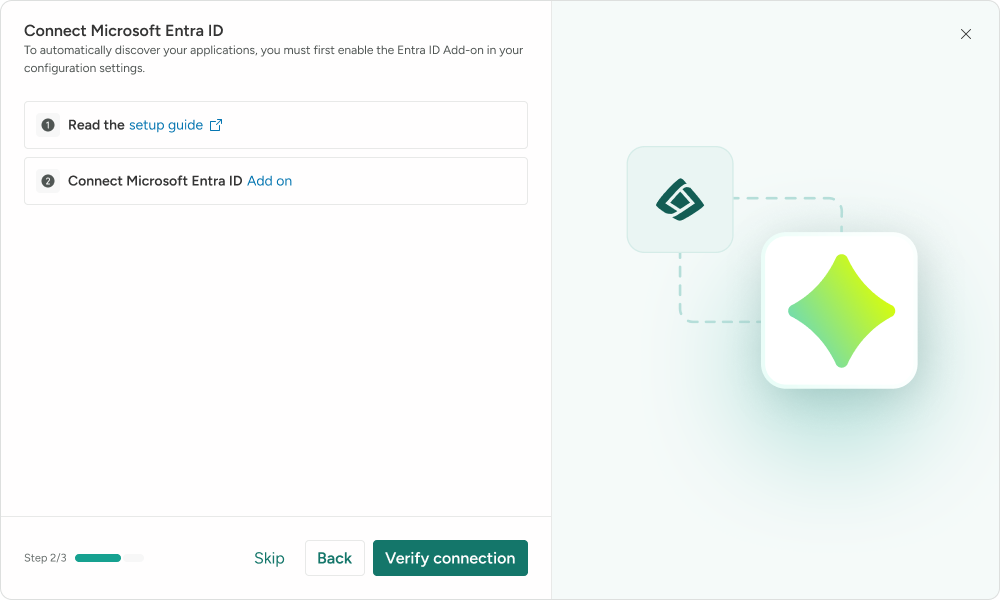

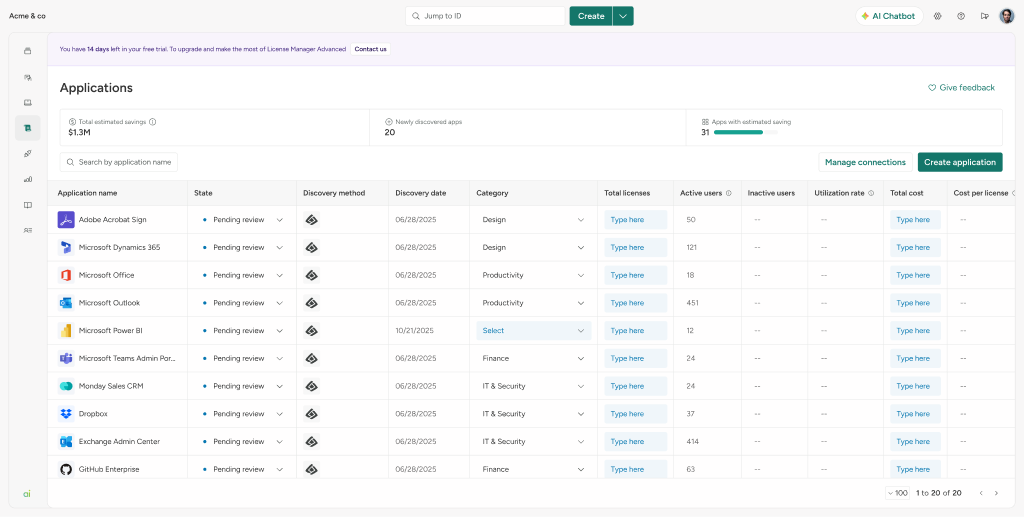

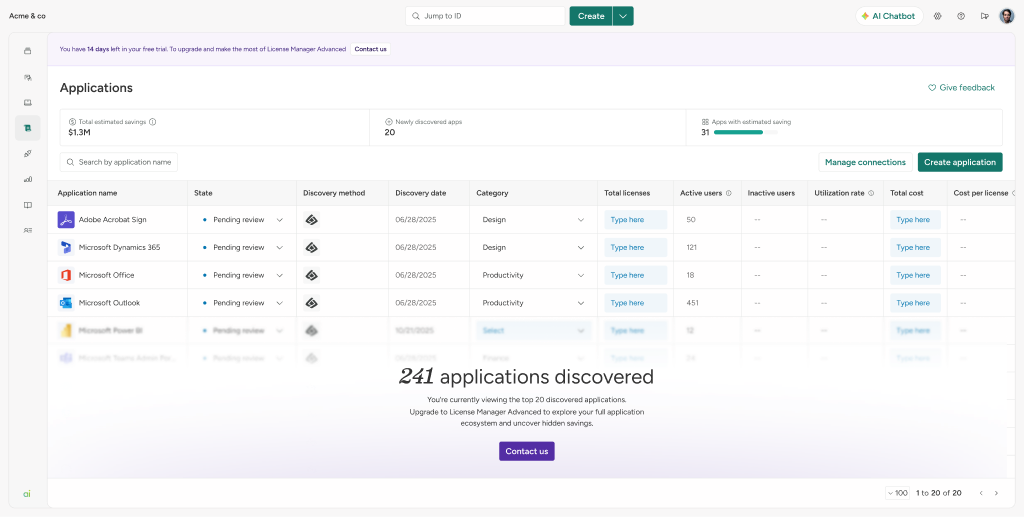

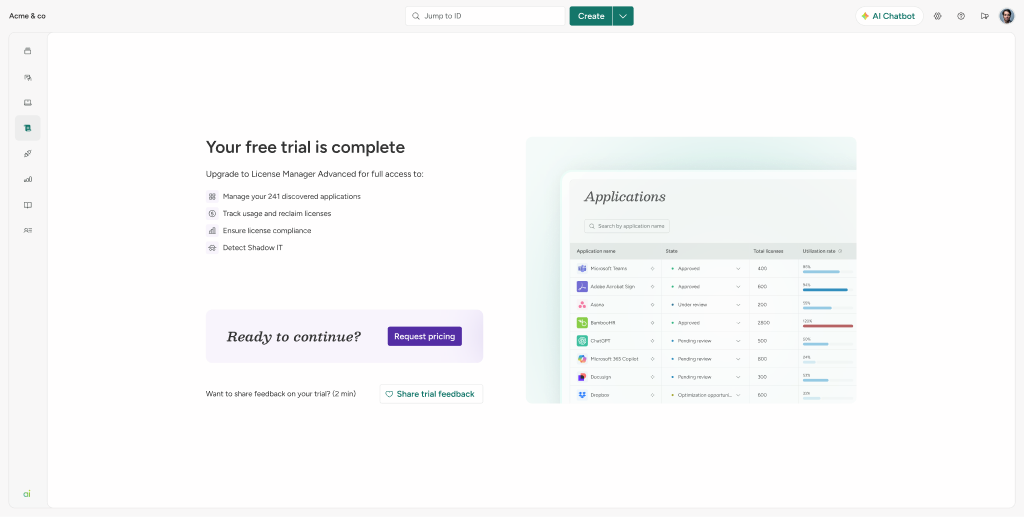

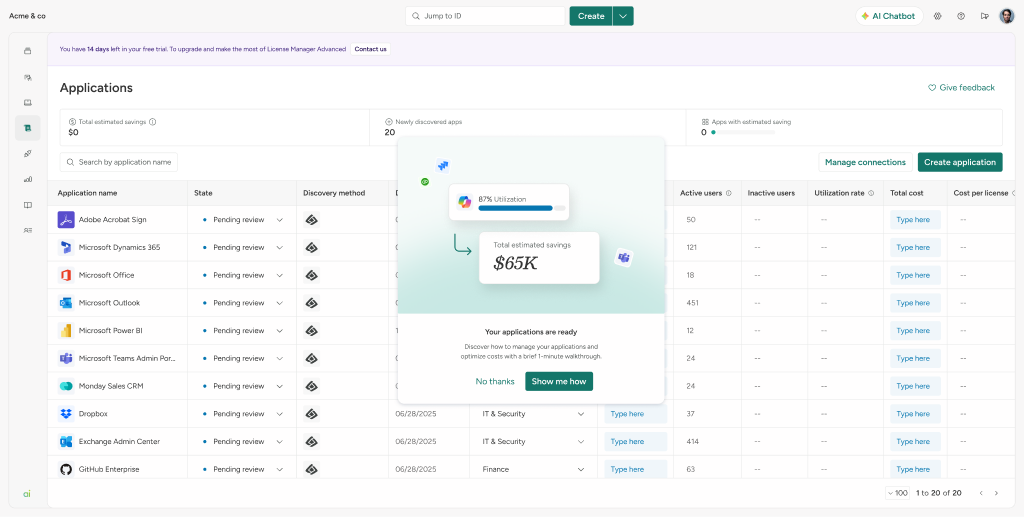

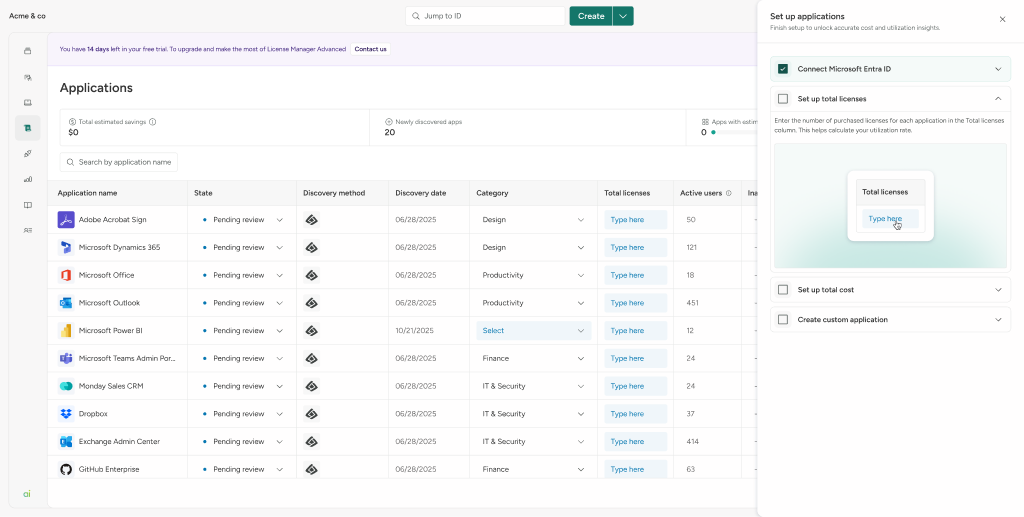

License Manager Advanced is a module within SysAid that discovers SaaS applications running across an organization and surfaces cost savings. The business wanted to introduce a product-led sales (PLS) motion as a first step toward full product-led growth (PLG): a free 14-day trial that lets prospects evaluate the product autonomously, reach value on their own terms, and then make a qualified, informed call to sales. The immediate goal was self-serve evaluation, not self-serve conversion, but the longer-term direction is a fully product-led model.

The design problem was unusual: you can't interview trial users before they've used the product. There's no discovery phase with them. You design, you launch, and you watch what happens in the recordings.